Welcome to the AI Foundations Course

This comprehensive course explores the key concepts needed to build production-grade AI applications. Through a combination of theoretical foundations and practical applications, you'll build the skills necessary to understand and create AI-powered solutions.

Course Objectives

Ensure all team members—scientists, data engineers, and software engineers—have a strong, common understanding of modern AI/LLM concepts, terminology, and best practices.

Why? This enables more effective collaboration, clearer communication, and faster consensus when designing, reviewing, or iterating on AI architectures.

Equip the team with the knowledge and practical skills needed to design, build, and deploy AI-powered applications that directly address business needs.

Why? This bridges the gap between technical capability and business value, ensuring our solutions are relevant and impactful.

Develop the ability to accurately estimate timelines, resource needs, and technical risks for AI projects. Empower team members to communicate requirements, dependencies, and trade-offs clearly to product managers and stakeholders.

Why? This leads to more predictable delivery, better alignment with business priorities, and fewer surprises during execution.

Adopt AI-powered coding tools (e.g., Amazon Q, Cline, Cursor) in daily workflows to boost productivity and code quality.

Why? Leveraging these tools allows us to focus on higher-level design and problem-solving, while reducing manual effort and boilerplate.

Encourage team members to experiment with new prompting techniques, architectures, and evaluation methods. Share learnings and best practices across the team to continuously raise the bar for AI application quality and innovation.

Why? The AI field is evolving rapidly; a culture of curiosity and sharing ensures we stay ahead and adapt quickly.

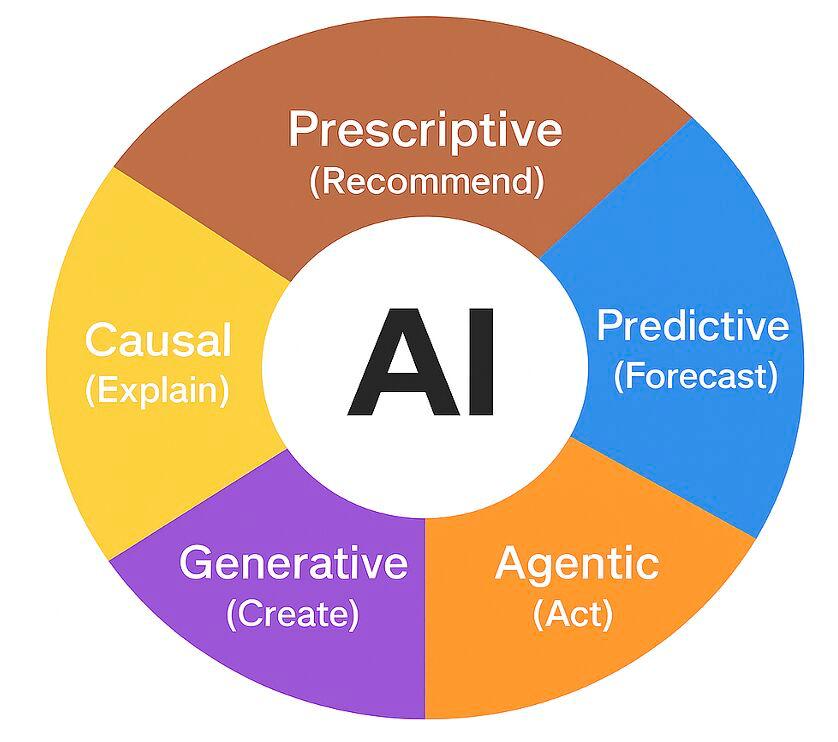

Flavors of AI

AI is not a single technology — it spans a spectrum of capabilities and purposes. Understanding these flavors helps clarify what today's systems can and cannot do, and where the field is heading.

| Flavor | Question it answers | Examples |
|---|---|---|
| 🟠 Predictive | What will happen? | Fraud detection, demand forecasting, recommendations |
| 🔵 Prescriptive | What should we do? | Dynamic pricing, supply chain optimization, treatment plans |
| 🟡 Causal | Why did it happen? | A/B test analysis, root cause analysis, scientific discovery |
| 🟣 Generative ★ | What can be created? | Claude, ChatGPT, DALL·E, Amazon Nova, Sora |
| 🔴 Agentic ★ | What actions should I take? | AI coworkers, autonomous research agents, software agents |

★ This course focuses on Generative and Agentic AI — the two flavors driving today's wave of AI application development.

The Evolution of Artificial Intelligence

AI has evolved through the convergence of algorithmic breakthroughs and advances in computing infrastructure. From John McCarthy's 1956 Dartmouth workshop to today's agentic systems — here's how we got here.

- The Birth of AI

- Machine Learning Era

- AlexNet & the Deep Learning Moment

- "Attention Is All You Need": The Transformer

- The ChatGPT Moment: GenAI Goes Mainstream

- Multimodal AI & Cloud Platforms

- Agentic AI & The Coding Inflection Point

Course Structure

This course is designed specifically for scientists, software engineers, and data engineers. Through a carefully structured learning path, you'll gain both the theoretical knowledge and the practical skills needed to build production-grade AI applications.

Part 1: Foundational Concepts

Master the core concepts of modern AI development. Starting from fundamentals, you'll progressively build knowledge of language models, prompt engineering, AI agents, embeddings & RAG, and MCP — the essential building blocks for creating AI applications on AWS.

Key Outcomes:

- Understand how LLMs work under the hood

- Master effective prompt engineering

- Build and evaluate agentic LLM applications

- Connect LLMs to your data with embeddings and RAG

- Understand the what, why, and how of MCP

Introduction to Large Language Models

Learn about the architecture, capabilities, and limitations of Large Language Models. Understand the fundamental concepts behind these powerful AI systems that are driving innovation across industries.

Prompt Engineering Guide

Master the art of effectively communicating with AI models through carefully crafted prompts. Learn strategies and techniques to get the most accurate and useful responses from language models.

Agentic LLM Applications

Discover how agentic LLM applications extend language models with memory, tool use, and iterative decision cycles to autonomously solve complex, multi-step tasks. This module covers how agentic LLM applications go beyond single-step or workflow-based applications to deliver adaptable, goal-driven AI solutions.
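
To make the idea of an iterative decision cycle concrete, here is a minimal sketch of an agent loop. It is not the course's implementation: `fake_model`, `calculator`, `TOOLS`, and `run_agent` are hypothetical names, and the "model" is a scripted stand-in so the example runs without any LLM service.

```python
def calculator(expression):
    """Hypothetical tool: adds two integers written as 'a + b'."""
    left, right = expression.split("+")
    return str(int(left) + int(right))


# Tool registry the agent is allowed to call (assumption for this sketch).
TOOLS = {"calculator": calculator}


def fake_model(goal, memory):
    """Scripted stand-in for an LLM call: decides the next step from memory."""
    if not memory:
        # Nothing observed yet, so ask for a tool call.
        return {"action": "calculator", "input": "2 + 3"}
    # A tool result is available, so finish with an answer built from it.
    last_observation = memory[-1][1]
    return {"action": "finish", "input": f"The answer is {last_observation}"}


def run_agent(goal, max_steps=5):
    """Decide, act, observe, and repeat until the model finishes or steps run out."""
    memory = []  # list of (decision, observation) pairs
    for _ in range(max_steps):
        decision = fake_model(goal, memory)
        if decision["action"] == "finish":
            return decision["input"]
        observation = TOOLS[decision["action"]](decision["input"])
        memory.append((decision, observation))
    return "Stopped: step limit reached"


answer = run_agent("What is 2 + 3?")
```

The step limit is a deliberate design choice: because the loop is driven by model output, a bound on iterations keeps a confused agent from running indefinitely.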

Embeddings & Retrieval-Augmented Generation (RAG)

Understand how embeddings encode semantic meaning, how vector stores enable similarity search, and how to build RAG pipelines that give LLMs access to your private data without fine-tuning. Covers Amazon Titan Embeddings, OpenSearch, and Amazon Bedrock Knowledge Bases.
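
As a rough illustration of similarity search and retrieval, the sketch below ranks documents by cosine similarity and places the best match into a prompt. Everything here is an assumption made for illustration: the three-dimensional vectors are hand-written stand-ins for real embeddings, and `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names, not part of any AWS API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "vector store": (text, embedding) pairs with made-up 3-d vectors.
DOCUMENTS = [
    ("Refunds are processed within 5 business days.", [0.9, 0.1, 0.0]),
    ("Our office is closed on public holidays.", [0.1, 0.9, 0.1]),
    ("Shipping is free for orders over $50.", [0.2, 0.1, 0.9]),
]


def retrieve(query_vector, k=1):
    """Return the k document texts most similar to the query vector."""
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc: cosine_similarity(query_vector, doc[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_prompt(question, query_vector, k=1):
    """Augment the question with retrieved context before it goes to an LLM."""
    context = "\n".join(retrieve(query_vector, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# The query vector is also hand-written; a real pipeline would embed the question.
prompt = build_prompt("How long do refunds take?", [0.8, 0.2, 0.1])
```

In a real pipeline an embedding model would produce the vectors and a vector store would perform the ranking, but the retrieve-then-augment flow has the same shape.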

Developer's Guide to Model Context Protocol (MCP)

Learn how to use MCP to build robust, maintainable AI applications. Understand the core principles of MCP and how it enables standardized communication between AI models and tools.
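
The sketch below hints at what standardized communication can look like in practice. MCP messages follow JSON-RPC 2.0, and tools are discovered and invoked with the `tools/list` and `tools/call` methods. The in-process `handle_request` dispatcher, the `add` tool, and the `TOOLS` registry are hypothetical simplifications: a real server also performs an initialization handshake and talks over a transport such as stdio or HTTP, both omitted here.

```python
import json


def add(arguments):
    """Hypothetical tool handler: returns the sum as text."""
    return str(arguments["a"] + arguments["b"])


# Hypothetical registry: tool name -> description, input schema, handler.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "inputSchema": {
            "type": "object",
            "properties": {"a": {"type": "integer"}, "b": {"type": "integer"}},
            "required": ["a", "b"],
        },
        "handler": add,
    }
}


def handle_request(raw_request):
    """Dispatch one JSON-RPC 2.0 request string and return a response string."""
    request = json.loads(raw_request)
    method = request["method"]
    if method == "tools/list":
        result = {
            "tools": [
                {
                    "name": name,
                    "description": tool["description"],
                    "inputSchema": tool["inputSchema"],
                }
                for name, tool in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        params = request["params"]
        text = TOOLS[params["name"]]["handler"](params["arguments"])
        result = {"content": [{"type": "text", "text": text}]}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" error code.
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


call = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "add", "arguments": {"a": 2, "b": 3}},
})
response = json.loads(handle_request(call))
```

Because every tool is described by a name and an input schema, a client can discover and call tools it has never seen before without custom integration code for each one.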

Part 2: Building AI Applications

Part 1 gave you the concepts. Part 2 is where you build. Each module introduces a real tool or practice, and the part culminates in a capstone project — a working chat agent for this course, built on AWS using everything you've learned.

Key Outcomes:

- Build agents using Amazon Bedrock, AgentCore, and Strands SDK

- Use Spec-Driven Development with Kiro to go from spec to working code

- Monitor, evaluate, and guard AI applications in production

- Ship a real RAG-powered chat agent using AWS-native services

AWS AI Building Blocks

Get hands-on with the AWS services that power production AI applications. Covers Amazon Bedrock (foundation model API, model selection), Bedrock Agents (action groups, knowledge base integration), AgentCore Runtime and Gateway, and the Strands SDK for building agentic applications on AWS.

Spec-Driven Development with Kiro

Learn to build AI applications the right way — starting from a spec. Kiro is Amazon's AI-powered developer tool that supports both vibe coding and structured spec-driven development. This module covers the Kiro CLI and IDE, how to write effective specs, and how spec-driven workflows lead to better, more maintainable AI-powered code.

LLMOps: Monitoring, Evaluation & Guardrails

Understand the operational side of AI applications. Learn how to trace and monitor agent behavior with CloudWatch, evaluate output quality, manage prompt versions with Bedrock Prompt Management, and enforce safety boundaries with Bedrock Guardrails. What you learn here applies directly to the capstone project.

Capstone: Build the Course Chat Agent

Put it all together. Crawl and index this course's content into a Bedrock Knowledge Base, build a Strands SDK agent grounded in that knowledge, expose it through AgentCore Gateway as an MCP endpoint, instrument it with LLMOps tooling, and write the whole thing spec-first using Kiro. The result: a working AI assistant that knows this course, deployed on AWS.